Property Analysis: Mortgage Data

1 Mortgage Data

Most people who buy houses need mortgages, unfortunately. Fortuntately for me, we have a (reasonably good) source of information in this on the BPFI website.

The data goes back to 2011 (so not quite as much as our house price data), and is collected at a monthly cadence. It appears to be most of the high-street banks in ireland, and is only for mortgages on residential properties.

It comes in an Excel sheet. Whoever released this seemed to value the aesthetic principles of the dataset in preference to usability in computer programs. Given that the primary audience seems to be journalists, this probably makes sense.

I did some artisanal data cleaning 1 on the file, and imported all the data as per below.

require(readxl) require(tidyverse) mortgage_sheets <- excel_sheets( "Website-mortgage-approvals-time-series-Mar2020.xls") volumes <- read_excel( "Website-mortgage-approvals-time-series-Mar2020.xls", sheet="Volumes", skip=4) names(volumes)[c(1:2, 8)] <- c("year", "month", "total") volumes2 <- normalise_names(volumes) values <- read_excel( "Website-mortgage-approvals-time-series-Mar2020.xls", sheet="Values", skip=4) names(values)[c(1:2, 8)] <- c("year", "month", "total") values2 <- normalise_names(values) av_values <- read_excel("Website-mortgage-approvals-time-series-Sep2018.xls", sheet="Average Value", skip=4) names(av_values)[c(1:2, 8)] <- c("year", "month", "total") av_values2 <- normalise_names(av_values) valtidy <- gather(values2, key="type", value="value", 3:7) %>% mutate(date=lubridate::ymd(paste( year, month, "01", sep="-_"))) voltidy <- gather(volumes, key="type", value="volume", 3:7) %>% mutate(date=lubridate::ymd(paste( year, month, "01", sep="-_"))) av_valtidy <- gather(av_values2, key="type", value="av_value", 3:7) %>% mutate(date=lubridate::ymd(paste( year, month, "01", sep="-_")))

Note the repeated code. Sometimes, a little copying is better than a large dependency. Sometimes people are lazy. Who can ever tell, in this workaday world?

The volumes tab is count, the values tab is in millions (1e6), and the average values tab is (presumably) the first divided by the second. There's also a notes tab, which we don't import 2

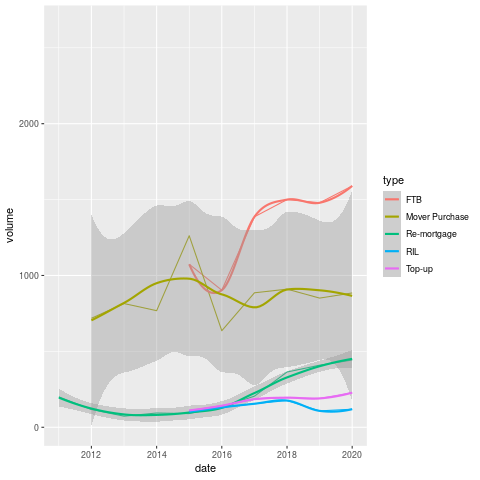

So, below we can see the volumes of mortgage approvals since 2011.

The most striking thing about this graph is the complete absence of first-time buyers between 2011 and 2014. I am tempted to declare it a data mistake, but given the economic conditions it is a little plausible. They may also suppress small numbers in the interests of privacy. It would be good if I figured this out, but I'll leave it for now.

When they do come back, they come back all at once, with near level FTB and traders-up. If this pattern held for the entirity of the boom, no wonder that things have gotten so messed up since.

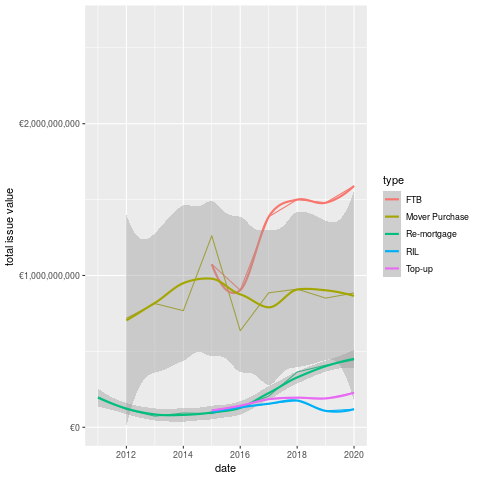

Next, we look at the total value of mortgages issued to particular kinds of buyers over the time period.

- So we have been 1-2bn per year for each of the FTB and Mover Purchase categories.

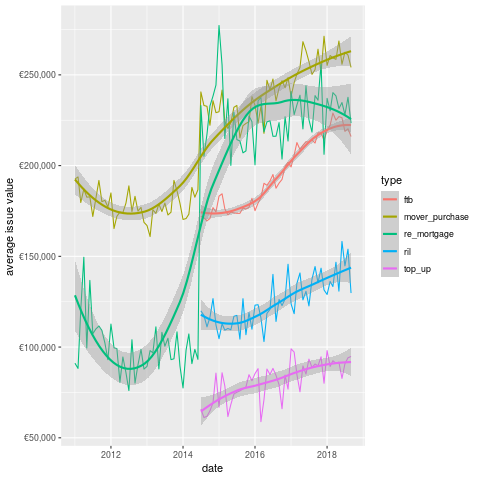

We can see what the average looks like in the plot below.

This chart is actually more interesting than either of the previous two. We can see that the average value given to FTB is significantly less than that for Mover Purchase. In some sense, this is weird as one would expect the movers to need less of a mortgage, given the existence of their previous property.

Another point that is obvious from the plot is the re-appearence of remortgaging in terms of value in 2014. Perhaps not coincidentally, the state emerged from the bailout program in December 2013 (paywall, but they have a few free articles).

Did the recovery in property prices encourage people to re-mortgage? Did the banks encourage these (presumably wealthy) borrowers? it's difficult to estbalish this from the data.

Additionally, given that I want to buy a house in Dublin, I'm not sure how useful the overall figures are. Unfortunately, this is all we have, which means we probably need more data (don't worry, we'll come back to the mortgage data eventually 3).

Next stop, the CSO!

1.1 Residential Prices by Month, Type of Property (HPM06)

The CSO have this arcane windows only application that people are supposed to use to get data. Being on Linux, I was SOL until some kind soul wrote code to access their database through an API.

They use a weird-ass format called JSON-Stat, which I know little about, but fortunately I can just call a function and get the data back as a dataframe.

source("rcso/read_api.R") prices <- getJSONstat("HPM06")

head(prices)

| type_of_residential_property | month | statistic | value |

|---|---|---|---|

| National - all residential properties | 2005-01-01 | Residential Property Price Index (Base Jan 2005 = 100) | 100 |

| National - all residential properties | 2005-01-01 | Percentage Change over 1 month for Residential Property Price Index (%) | nil |

| National - all residential properties | 2005-01-01 | Percentage Change over 3 months for Residential Property Price Index (%) | nil |

| National - all residential properties | 2005-01-01 | Percentage Change over 12 months for Residential Property Price Index (%) | nil |

| National - all residential properties | 2005-02-01 | Residential Property Price Index (Base Jan 2005 = 100) | 100.8 |

| National - all residential properties | 2005-02-01 | Percentage Change over 1 month for Residential Property Price Index (%) | 0.8 |

- So the type variable tells us what we are measuring, the statistic defines what the value means.

- This is awkward data to work with, but tidying it up should help.

We'll keep type in the rows, but move all the statistics into their own columns, to facilitate plotting.

prices2 <- spread(prices, key="statistic", value="value") prices3 <- normalise_names(prices2)

The names are still really long, but at least they don't have spaces anymore.

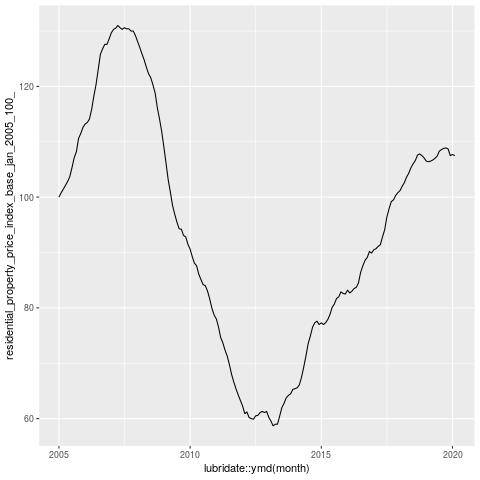

The variable we care about here is the index. This is normalised to 2005, at 100, so we can interpret the graph as the change since then.

Wow, so overall we're in 2005 again. I remember telling people not to buy houses around then. Mind you I was just bitter because no-one would give me a mortgage 4.

This kinda scares the hell out of me, but I suspect that the numbers of houses being sold today are a fraction of those sold during the boom.

1.2 New Property Prices

Wow, this is mental. House prices have basically only gone down a lot once, and that was the boom. This may be why many Irish people regard property as such a good asset. Additionally, we're now seeing ZIRP and QE inject large sums of money into the economy, which has pumped up asset prices 5.

On the other hand, Dublin looked pretty mental in 2015 (when this datasource stops). Note that this is only for new properties, which is skewed by the scarcity. I probably need to get some counts to normalise.

Additionally, I need to look at population flows over the time periods concerned. If I had counts for houses built and available, that would be super useful. Not sure if such a source exists, however.

1.3 Pulling it All Together

- So what have we learned from today's excursions?

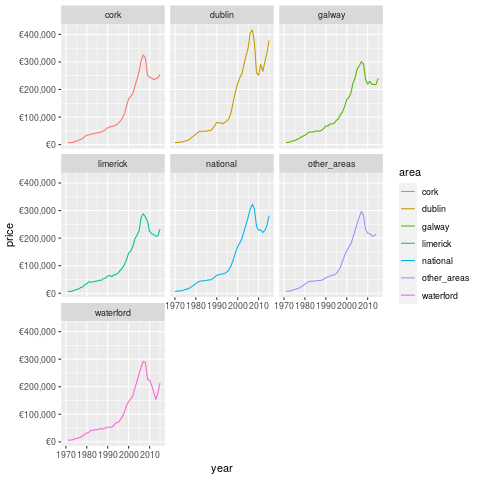

Firstly, we reviewed the change in property prices by county and date of sale from the PPR. This source is entirely comprehensive, but only covers sales, so we can't figure out demand from this, except by looking at price. Additionally, the address data is pretty raw, and it would take a whole lot of time or cost a whole bunch of money 6 to make it more usable.

If that could be done, the CSO have a lot of good area data which I barely scratch the surface of here. The most obvious next avenue of approach is through population statistics and change rates, and this would be expected to impact demand for housing.

You could then normalise the data by the population and get more useful estimates.

However, another issue which we're ingoring is rents, as a lot of property is not available to be sold. This is becoming increasingly common, especially in Dublin. I believe that a bunch of the really large transactions in the PPR are the result of such arrangements. We can get rents from various websites, in compliance with all legal processes :)

Finally, we need to measure the delta between asking and sale prices. Again, this can be obtained through various web-scraping means and sources. This delta will then allow us to make somewhat (more) useful decisions with respect to property 7

So, that's what I'm going to do, I guess. To be fair, this post is probably more like 2-3 articles, but I can't handle not being finished anymore, so I'm calling it done.

Footnotes:

i.e. by hand

but i did in fact read it. Reading any notes provided is a super-power.

I don't think anyone except me worries about this

we all partied, dontcha know?

I am still not sure if this was just accidental, or malicious

I checked with a reasonably cheap provider, and it would be 800 quid for the whole sample

though presumably even if I built a working method, it would stop working as more people did it